TL;DR

- AI Agents are LLM applications that interact with an environment multiple times without human intervention to achieve a specific task.

- Like autonomous driving, the promise of AI agents is a concept everyone can understand. As a result, many startups have emerged with agentic capabilities.

- While the space is exciting, technical barriers such as a lack of training data and compounding error rates will prevent widespread production use of multi-turn LLM agents in the short term. Currently, even the most effective web agents succeed at most 57% of the time.

- Research and engineering efforts are underway and showing early promise to improve success rates and close the gap in performance.

- We are excited about the space and see opportunities in areas where there is an abundance of training data, efficiency in LLM interaction, and/or well-defined workflows to automate.

In March, we wrote about “agentic” AI. Since then, we’ve observed significant VC interest in startups with agentic capabilities. AI Agents are LLM applications that interact with an environment multiple times without human intervention to achieve a specific goal. Example use cases include scheduling meetings, processing customer service requests, making purchases online, or executing trades in financial markets. Agents are powerful tools for streamlining operations and enhancing efficiency in everyday life. The potential for this technology is immense and easy to understand. Still, AI agents face many technical challenges before fully delivering on their promises.

In this deep dive, we’ll explore what it takes to build AI Agents and the hurdles to bringing them into production, drawing on expert insights to assess their potential and limitations.

We Spoke to the Experts.

To better understand the technology, we consulted academics at Carnegie Mellon University’s School of Computer Science, where Managing Partner Phil Bronner is an alumnus and has been a steadfast investor in fellow alumni. We attended a fascinating conference on AI agents facilitated by Associate Professor of Computer Science and Chief Scientist of All Hands AI, Graham Neubig. This deep dive wouldn’t be possible without Graham and the other brilliant minds we’ve spoken to since. We encourage anyone interested in this space to follow these experts to stay on the cutting edge:

- Carnegie Mellon: Graham Neubig, Daniel Fried, Shuyan Zhou (Meta), Jing Yu Koh (Meta), Ruslan Salakhutdinov (Meta), Frank Xu, and more

- Ohio State: Yu Su

- Columbia University: Zhou “Jo” Yu

- University of Illinois: Heng Ji

- Microsoft: Rogerio Bonatti

Their work continues to push the boundaries of what AI can achieve, and their contributions are paving the way for the next generation of autonomous systems.

Defining AI Agents.

For this piece, when we discuss agents, we refer to multi-turn LLM agents, which are applications built atop large language models that interact with an environment multiple times without human intervention to achieve a specific goal. Unlike simpler AI systems, AI Agents navigate and solve problems in dynamic environments through iterative interactions.

The best example of agents currently exists in software development. These agents generate code, debug, and test themselves until the code accomplishes the task. Companies like Cognition Labs, All Hands, Replit, Factory, and others are working to address this opportunity.

The Journey from LLM to Agent:

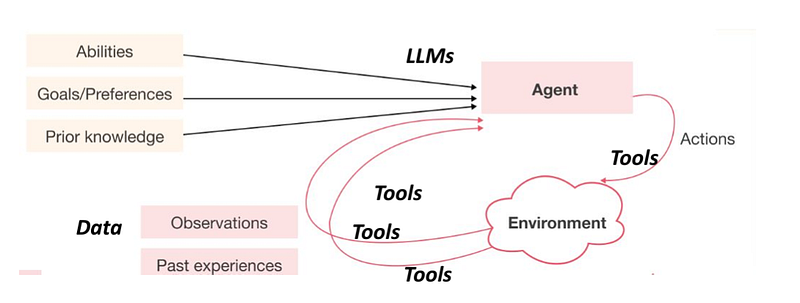

Knowledge, defined goals, and abilities form the basis for an agent’s decision-making and actions. The process can be outlined in a cyclical series of steps:

- Goal Initialization: The agent starts with a clear objective, using its knowledge and abilities to define its approach.

- Planning and Reasoning: Effective planning is crucial for breaking down complex tasks into manageable components. The agent uses arithmetic, commonsense, and symbolic reasoning to devise a viable approach. Techniques like Chain of Thought prompting help decompose tasks into smaller, actionable steps.

- Perception: The agent gathers information from its environment, building situational awareness that informs its actions. This involves collecting relevant observations through sensory inputs or data sources.

- Tool Usability: The agent ensures it can effectively execute the planned actions by utilizing the appropriate tools. This involves selecting and applying tools to generate action calls, perform tasks in the environment, and feed new observations into the system. The complexity of tools ranges widely, supporting tasks from simple arithmetic calculations to complex API interactions.

- Action: Armed with a plan and environmental data, the agent executes tasks such as computations or API calls. The success of this phase depends on the agent’s interaction with external systems or resources.

- Observation: After taking action, the agent assesses the results by observing environmental changes. This feedback loop is essential for determining progress and making necessary adjustments.

- Reaction: If the goal isn’t achieved, the agent updates its approach based on new information and adjusts its actions. This iterative cycle of action, observation, and reaction continues until the goal is reached or capabilities are exhausted.

By integrating planning, perception, tool usability, action, observation, and reaction, an LLM becomes a functional agent capable of navigating complex tasks and adapting strategies over time.
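To make the cycle concrete, here is a minimal sketch of the loop in Python. It is a toy under stated assumptions, not how any production agent is built: `CounterEnvironment`, `ToyAgent`, and the two tools are hypothetical names invented for illustration, and the `plan` method is a hard-coded rule standing in for the LLM call a real agent would make.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class CounterEnvironment:
    """Hypothetical toy environment: a counter the agent must raise to a target."""
    value: int = 0

    def observe(self) -> int:
        return self.value


@dataclass
class ToyAgent:
    goal: int                                # Goal initialization
    tools: Dict[str, Callable[[int], int]]   # Tools the agent may call
    history: List[str] = field(default_factory=list)

    def plan(self, observation: int) -> str:
        """Planning stand-in: a real agent would ask an LLM to choose a tool."""
        remaining = self.goal - observation
        return "add_five" if remaining >= 5 else "add_one"

    def run(self, env: CounterEnvironment, max_steps: int = 20) -> bool:
        for _ in range(max_steps):
            observation = env.observe()           # Perception / observation
            if observation == self.goal:          # Goal reached, stop looping
                return True
            tool_name = self.plan(observation)    # Planning and tool selection
            env.value = self.tools[tool_name](env.value)  # Action
            self.history.append(tool_name)        # Keep a trace of what was tried
        return env.observe() == self.goal         # Step budget exhausted


tools = {"add_one": lambda x: x + 1, "add_five": lambda x: x + 5}
agent = ToyAgent(goal=12, tools=tools)
env = CounterEnvironment()
succeeded = agent.run(env)
print(succeeded, agent.history)
```

The reaction step shows up here as re-planning from a fresh observation on every pass through the loop, and the `max_steps` budget plays the role of "capabilities are exhausted."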

Training LLM Agents

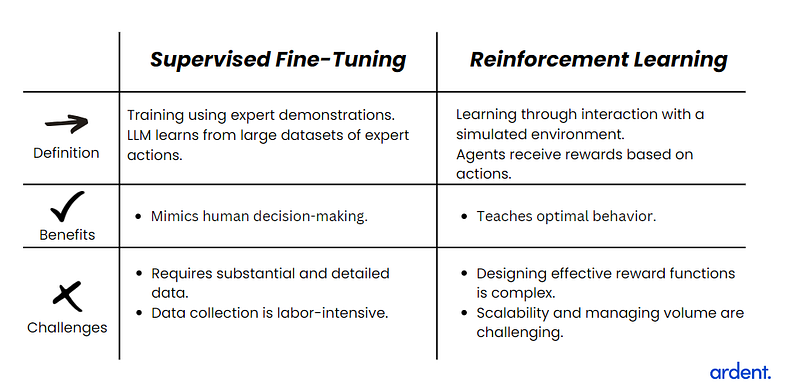

Training LLM agents for dynamic environments involves two main methods: Supervised Fine-Tuning and Reinforcement Learning. Each has distinct strengths and challenges.

Challenges in LLM Agent Production

Transitioning AI agents from the research phase to reliable tools involves significant challenges, including:

Lack of training data

One of the most pressing challenges is the need for high-quality, task-specific training data. Unlike broader AI systems, which can often leverage large, generalized datasets, AI agents require highly specialized data to learn and execute specific tasks within defined environments effectively. This becomes particularly challenging in scenarios where real-world examples are scarce or difficult to simulate. For instance, training an AI agent to perform complex, nuanced tasks in dynamic environments demands detailed, context-rich data that is often not readily available. The absence of such targeted data can lead to ineffective training, where agents either fail to generalize or exhibit suboptimal performance due to gaps in their learning. Issues of data privacy, high collection costs, and the inherent biases in available datasets compound this challenge.

Accuracy and Compounding Errors

It is a significant challenge to maintain accuracy throughout the sequence of actions required to complete a task. LLM agents operate in dynamic environments where each decision impacts subsequent steps. LLMs hallucinate. Given the number of LLM calls necessary to complete a task, minor errors compound, leading to flawed outcomes as the task progresses. High accuracy at every stage is essential to prevent these errors from escalating, and advanced reasoning is necessary for consistent decision-making, especially in unpredictable situations.
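A back-of-the-envelope calculation shows how quickly errors compound. The sketch below assumes every step succeeds independently with the same probability and that a single failed step sinks the whole task. Both are simplifying assumptions of ours rather than measured properties of any agent, and the function names are illustrative.

```python
def task_success_rate(step_accuracy: float, num_steps: int) -> float:
    """Chance that all steps succeed, assuming independent, equally accurate steps."""
    if not 0.0 <= step_accuracy <= 1.0:
        raise ValueError("step_accuracy must be between 0 and 1")
    if num_steps < 0:
        raise ValueError("num_steps must be non-negative")
    return step_accuracy ** num_steps


def required_step_accuracy(target_success: float, num_steps: int) -> float:
    """Per-step accuracy needed to hit a target end-to-end success rate."""
    if not 0.0 < target_success <= 1.0:
        raise ValueError("target_success must be in (0, 1]")
    if num_steps < 1:
        raise ValueError("num_steps must be at least 1")
    return target_success ** (1.0 / num_steps)


for steps in (1, 5, 10, 20):
    print(steps, round(task_success_rate(0.95, steps), 3))
```

Under these assumptions, an agent that is right 95% of the time on each step finishes a 20-step task only about 36% of the time, which is why per-step accuracy matters so much for multi-turn agents.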

Nascent Space and Research Challenges

The development of LLM agents is still in its early stages, especially compared to more mature LLM applications like text generation or language translation. Given that this is a relatively new field, there is still much to learn about how best to train, deploy, and optimize these agents. Theoretical advancements must be matched with practical implementations, often revealing unforeseen difficulties.

Advancing Agent Performance: A multi-faceted approach

We are optimistic about the various efforts being made to enhance agent performance across multiple schools of thought. These advancements are primarily driven by two complementary approaches: academic research and practical engineering.

Research

Researchers from top universities and major tech companies are focusing on refining different components of the agent lifecycle. Their efforts include:

- Core LLM Enhancement: Improving the fundamental capabilities of Large Language Models.

- Task-Specific Model Development: Creating and fine-tuning dedicated models for individual tasks such as planning and perception.

- Agent-Centric Fine-Tuning: Tailoring models for specific agent use cases.

- Specialized Training Data: Developing better datasets specifically designed for agent applications.

Engineering Innovations

In parallel, technical entrepreneurs and companies are taking a pragmatic approach to creating competent agents using available tools. Their strategy involves:

- Scaffolding: Implementing hard-coded steps for planning, reasoning, and tool selection, providing a clear structure for agents to follow.

- Supervisory Models: Employing additional models to review and refine the output of the primary LLM agent.

- RAG and Fine-Tuning: Utilizing Retrieval-Augmented Generation and domain-specific fine-tuning to ground agents in relevant contexts.

- Guardrails: Implementing both process and content safeguards to ensure reliable and appropriate agent behavior.

- Structured Output: Leveraging techniques like OpenAI’s structured outputs API to enhance the consistency and usability of agent responses.
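Several of these techniques can be combined in a few lines. The sketch below is an illustration under our own assumptions, not a description of any company's system: it validates a model's reply against a small hand-written schema (plain JSON checks standing in for a structured-output feature), retries with a stricter instruction, and falls back to a safe action as a guardrail. The action names and the `make_flaky_model` stub are hypothetical, and no real LLM API is called.

```python
import json
from typing import Callable, Optional

ALLOWED_ACTIONS = {"refund", "escalate", "reply"}  # hypothetical action set


def parse_action(raw: str) -> Optional[dict]:
    """Return the parsed action if it matches the expected shape, else None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    action = data.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return None
    if not isinstance(data.get("message"), str):
        return None
    return data


def call_with_guardrail(model: Callable[[str], str], prompt: str,
                        max_attempts: int = 3) -> dict:
    """Scaffolded call: validate the output, retry, then fall back safely."""
    for _ in range(max_attempts):
        candidate = parse_action(model(prompt))
        if candidate is not None:
            return candidate
        # Tighten the instruction before the next attempt.
        prompt += "\nReturn only valid JSON with keys 'action' and 'message'."
    # Guardrail: hand off to a human rather than act on malformed output.
    return {"action": "escalate", "message": "Could not produce a valid action."}


def make_flaky_model() -> Callable[[str], str]:
    """Hypothetical stand-in for an LLM: free text first, valid JSON second."""
    replies = iter([
        "Sure! I'll refund that.",
        '{"action": "refund", "message": "Refund issued."}',
    ])
    return lambda prompt: next(replies)


result = call_with_guardrail(make_flaky_model(), "Customer asks for a refund.")
print(result)
```

The design choice worth noting is the fallback: when validation keeps failing, the wrapper escalates instead of guessing, trading some automation for reliability.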

Companies like Sierra are at the forefront of implementing these engineering techniques, demonstrating that significant improvements can be achieved through clever application of existing technologies (the company’s co-founder discusses this in depth in this episode of the Training Data podcast).

This dual approach of cutting-edge research and practical engineering is rapidly advancing the field of agent performance. As these efforts continue to converge, we anticipate seeing increasingly capable and reliable AI agents across a wide range of applications.

Key Areas of Opportunity for Agentic Applications

We believe three key factors make certain areas more conducive to the successful implementation of agentic applications in the shorter term: an abundance of training data, efficiency in LLM interaction, and well-defined workflows to automate.

Prime Domains for Agentic Applications

1. Code and Data Science

- Rich in training data with well-documented paths to success

- Clear success criteria, facilitating effective reward systems for agents

- Potential for significant productivity gains in software development and data analysis

2. Customer Success (with Limitations)

- Suitable for smaller, straightforward tasks

- Opportunity: Streamlining routine customer interactions and inquiries

- Challenges: Frequent LLM calls required, increasing risk of compounding errors

3. Next-Generation Agentic Process Automation (APA)

APAs, which are evolving from Robotic Process Automation (RPA), are well-positioned to advance LLM agents due to:

- Pre-Defined Workflows: Leveraging existing RPA libraries to streamline agent integration

- Established Architecture: Utilizing low/no-code platforms and APIs for custom tool creation

- Customer Experience: Building on RPA’s track record in expanding automation efforts

Startups also have a significant opportunity to help improve agents’ performance and support their creation. For example, WebArena is a realistic environment designed for training and testing web agents. It provides a controlled setting where agents can interact with web-based systems, which makes it easier to develop and refine their capabilities for real-world web applications.

Meanwhile, Microsoft recently released Windows Agent Arena, spearheaded by Rogerio Bonatti, which provides a robust benchmark for AI agents acting on Windows systems. With over 150 tasks across various applications, it is intended to accelerate the development and evaluation of agents that can reason, plan, and act in a real OS environment. While a majority of today’s benchmarks take up to a week to compute in series on a single machine, one of the main innovations of Agent Arena is parallelization in Azure, which allows a full benchmark evaluation in less than 20 minutes.

Sotopia offers interactive evaluation for social intelligence in language agents. The platform assesses how well agents handle social interactions and complex language tasks, which is crucial for applications requiring nuanced communication.

Conclusion

We’re excited about AI agents’ future, but there’s still significant work ahead. In VC, staying ahead means identifying goals that push the technical boundaries of what’s currently achievable.

Multi-modal agents remain a distant goal, but they represent a crucial next step, after which robots will further expand the potential of AI agents. The path forward is challenging, but that’s where the most transformative opportunities lie.

Thank you, especially to the experts who helped us put this together. If you are considering building multi-turn LLM agents, please reach out!